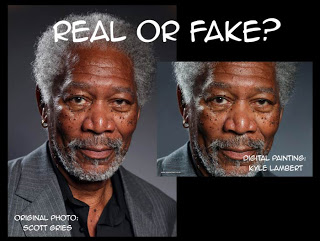

If you’re into digital art, and if you haven’t been hiding in a cave for the last week, you will have seen the hyper-realistic “finger painting” of actor Morgan Freeman.

Apparently, artist Kyle Lambert used the Procreate app to create a finger-painting on an iPad that is so realistic it is indistinguishable from the celebrity photo it was copied from.

It’s all over social media and even the mainstream news, with the obvious question now being “Real or fake?”

Not one to turn my back on a controversy, I just have to bite. I can’t help it, it’s in my genes. And yes, this will be long.

EDIT: The short version:

I think this is probably real except that, perhaps, Photoshop was used in some way during the process, if only in a supporting role. I certainly don’t see it as impossible – assuming the hardware and software are technically capable.

Read on if you want the long version…

Before I begin, let me say that I don’t own an iPad. I thought about getting one as a handy-dandy, full-colour sketch tool, but everything I’ve read about them suggests that painting on one is slightly less gratifying than shoving an old kindergarten crayon around with your foot.

Well, perhaps not that bad, but the device works on finger gestures so, unless you’re blessed with literally a handful of pressure-sensitive, needle-point digits, it’s hardly a precision instrument (yes, there are pens available, but the claim being made here is about finger-painting). Of course, as with all technologies, improvements are made with each generation, and developers continue to create better software to exploit any benefits the device might offer. When it comes to the digital world, people should have learned a long time ago not to make blanket statements about what’s not possible.

So maybe I need to get myself updated on what can be done with an iPad these days.

Promoters of the Morgan Freeman “finger painting” would have us believe that the iPad’s time has arrived and that, for less than $7 and a 54 MB download, you could have a painting tool that rivals Photoshop.

But can you really do this with your fingers?

The jury may still be out on how Lambert actually created the piece that’s being touted as an amazingly life-like finger-painting, but the evidence against it being done entirely in one cheap app on an iPad is compelling.

The image is so accurate there are suggestions around social media that the artist simply started with the original photo by Scott Gries, and “unpainted” it, slowly reducing detail and deleting colour until there was nothing left, and then reversed the video to make it look like it was built from scratch.

While that seems feasible, I don’t think it’s necessarily the only way this could have been done (update: after some more thought, I actually think this would be no easier than just painting from scratch). Plus, despite claims by some detractors, the Lambert image is not a pixel-perfect copy of the original photo.

When the two images are overlaid at full size in Photoshop, there are clear shifts as the top layer is toggled on and off. The most noticeable deformities are around the subject’s right eye (you can just see it by using the slider in the small image at this blog). That said, the finger-painting is as good as 100% accurate at the macro level.
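Toggling the top layer on and off is one way to spot the shifts; another is Photoshop’s Difference blend mode, which shows the absolute difference between the two layers so that matching areas turn black. The minimal Python sketch below applies the same arithmetic, assuming both images have already been decoded into same-sized grayscale grids with values 0–255 (decoding real files would need a library such as Pillow). `difference_map` and `shifted_pixels` are hypothetical helpers, the threshold is an arbitrary choice, and the tiny “images” are made up for illustration.

```python
def difference_map(bottom, top):
    """Per-pixel absolute difference |top - bottom|, the same arithmetic as
    Photoshop's Difference blend mode (identical areas come out as 0, i.e. black)."""
    if len(bottom) != len(top) or any(len(rb) != len(rt) for rb, rt in zip(bottom, top)):
        raise ValueError("images must have the same dimensions")
    return [[abs(t - b) for b, t in zip(row_b, row_t)] for row_b, row_t in zip(bottom, top)]


def shifted_pixels(bottom, top, threshold=16):
    """(row, col) positions where the two images differ by more than `threshold`.
    The default threshold is an arbitrary choice to ignore minor tonal noise."""
    diff = difference_map(bottom, top)
    return [(y, x) for y, row in enumerate(diff) for x, d in enumerate(row) if d > threshold]


# Tiny made-up 3x3 grayscale "images": a bright feature that has moved one pixel right.
photo = [[10, 10, 10], [10, 200, 10], [10, 10, 10]]
painting = [[10, 10, 10], [10, 10, 200], [10, 10, 10]]
print(shifted_pixels(photo, painting))  # [(1, 1), (1, 2)]
```

A shifted feature shows up twice, once where it was and once where it moved to, which is why a misplaced eye stands out so clearly when the layers are compared.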

But this is a digital image, and that opens up other possibilities that don’t entirely dismiss the claim that it was created from scratch. For example, with enough zoom, down to pixel level, it would be possible to simply copy every pixel, one at a time, from start to finish. It would be time-consuming, but at a finished size of 740,000 pixels, and if my maths is right, it would take around 600 hours to complete at a rate of about one pixel every three seconds.
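The estimate is easy to check. The small Python sketch below converts a pixel count and a per-pixel rate into hours. The 740,000-pixel size comes from the text; the rates tried are assumptions standing in for “a few seconds”, and `hours_to_paint` is a hypothetical helper.

```python
def hours_to_paint(pixels, seconds_per_pixel):
    """Hours needed to place every pixel by hand at a constant rate."""
    return pixels * seconds_per_pixel / 3600


total_pixels = 740_000  # finished size quoted in the article
for rate in (2, 3, 4):  # assumed seconds per pixel
    print(f"{rate} s/pixel: {hours_to_paint(total_pixels, rate):.0f} hours")
```

At three seconds per pixel this gives about 617 hours, which matches the “around 600 hours” figure. Two or four seconds per pixel moves the total to roughly 411 or 822 hours, so the estimate depends heavily on the assumed rate.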

Note that large areas of the background could easily be filled in single passes, and adjoining areas blended with painting tools. That’s probably hundreds of thousands of pixels that don’t have to be painted individually. This makes the author’s claim of 200 hours’ work look like a distinct possibility, even one pixel at a time. No one’s claiming it was done that way, and the video suggests otherwise, but it demonstrates that the piece could be completed from scratch within a realistic time frame.
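The same argument can be run in reverse: how much of the image would have to come from fills and blending for 200 hours to be enough? The sketch below assumes the same rate of about three seconds per hand-placed pixel. That rate is carried over from the 600-hour estimate and is not a measured figure. `pixel_budget` is a hypothetical helper.

```python
SECONDS_PER_HOUR = 3600


def pixel_budget(hours, seconds_per_pixel):
    """How many pixels can be placed individually in the given number of hours."""
    return int(hours * SECONDS_PER_HOUR // seconds_per_pixel)


total_pixels = 740_000                      # finished size quoted in the article
hand_painted = pixel_budget(200, 3)         # pixels placed one at a time in 200 hours
bulk_filled = total_pixels - hand_painted   # pixels that would need fills and blending
print(hand_painted, bulk_filled)            # 240000 500000
```

On those assumptions, about 500,000 of the 740,000 pixels would need to come from fills and blended areas. That fits the “hundreds of thousands of pixels” estimate.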

Some people seem gobsmacked that it’s even possible to paint photo-realistically, but I am not so dismissive of the notion that the image could have been created from scratch. Chuck Close was doing it 30 years ago – with real paint! I even dabbled myself, back in my airbrushing days.

Adding detail to an image is trivial, if time-consuming, in the digital age. Even my simple caricatures of Tony Abbott and Wilson Tuckey have some level of “realistic” detail, such as pores and sweat beads, and they were completed in around 10 hours each, including the underlying caricaturing process. And they were done from scratch with no tracing, no photo manipulation and no photo sampling. Plus, caricaturing and digital painting were very new to me when I did those. There are people out there with much better Photoshop skills than I possess. Imagine what they could do with a high-quality source photo and 200 spare hours. In fact, you may not need to imagine it; Kyle Lambert may well have demonstrated it.

I think it’s possible. I don’t even think it’s difficult. Boring perhaps. Mind-numbingly boring. But possible, with time.

One area of concern is that the video of the painting process shows major features being placed early and never substantially adjusted as the painting progressed. That would suggest the image was traced, yet the original photo is never shown. If the photo wasn’t traced in any way, it’s an astonishing achievement that would likely have James Randi waving a million dollars around for a repeat performance under controlled conditions – and a lot of social media commentators eating their hats. Then again, perhaps adjustments simply disappear when 200 hours of painting is squeezed into a few minutes of video.

So, was it traced?

Lambert told Gizmodo “…at no stage was the original photograph on my iPad or inside the Procreate app. Procreate documents the entire painting process, so even if I wanted to import a photo layer it would have shown in the video export from the app.”

Forgive me for thinking that answer would be right at home in any parliament, anywhere in the world. It is sufficiently vague as to be of little use at all.

Where was the original photograph? How was it used?

Is it possible, for example, to plug the iPad into a computer and have the iPad screen image overlay an image in Photoshop? That way you could effectively use the iPad as a Cintiq-style drawing tablet whilst watching the tracing action on the computer monitor. Done this way, both claims could be true: that the image was painted on the iPad, and that the original photo was never on there.

But is this possible? I don’t have an iPad to try it out so I don’t know.

One thing’s for sure: if this is possible, it might answer some of the questions being asked about the finger-painting’s metadata. The metadata appears to list only Photoshop as the software used in its creation, and it also apparently includes metadata from the Gries photo in the image’s history. Was the Gries photo open in Photoshop and being traced, and were Procreate layers transferred to Photoshop as the painting progressed?

When I create detailed colour caricatures in Photoshop, I tend to use quite a few layers. I can’t imagine how many layers I’d use to reach the level of detail we’re talking about here. 295 maybe?

I’ll reserve my opinion on how this was done. But from the metadata alone (first created in Photoshop CS5 on a Mac, in 2011), it does seem that there’s much more to this story than we’re being told by those in the know. I don’t necessarily think anyone’s lying, but there might be a few important details that haven’t been mentioned – yet.
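What do “the software used” and “the history” look like in practice? Photoshop typically stores them in an embedded XMP packet. The relevant properties include `xmp:CreatorTool`, `xmp:CreateDate` and an `xmpMM:History` list of events, each of which can name a `stEvt:softwareAgent`. The minimal sketch below pulls those fields out using only Python’s standard library. It assumes the packet is stored uncompressed in the file, as it usually is in JPEGs, and that it begins with an `x:xmpmeta` element. The helper names are hypothetical, and the sample packet is invented for illustration. It is not the metadata of Lambert’s image.

```python
import re
import xml.etree.ElementTree as ET

# Namespace URIs used by Adobe XMP for the properties read below.
NS = {
    "rdf": "http://www.w3.org/1999/02/22-rdf-syntax-ns#",
    "xmp": "http://ns.adobe.com/xap/1.0/",
    "xmpMM": "http://ns.adobe.com/xap/1.0/mm/",
    "stEvt": "http://ns.adobe.com/xap/1.0/sType/ResourceEvent#",
}


def extract_xmp(data: bytes):
    """Return the raw XMP packet embedded in a file's bytes, or None if absent."""
    match = re.search(rb"<x:xmpmeta.*?</x:xmpmeta>", data, re.DOTALL)
    return match.group(0) if match else None


def read_property(elem, prefix, name):
    """XMP properties may be written as attributes or as child elements."""
    key = f"{{{NS[prefix]}}}{name}"
    if key in elem.attrib:
        return elem.attrib[key]
    child = elem.find(f"{prefix}:{name}", NS)
    return child.text if child is not None else None


def summarize_xmp(packet: bytes):
    """Collect the creating software, creation date and history events."""
    root = ET.fromstring(packet)
    summary = {"CreatorTool": None, "CreateDate": None, "history": []}
    for desc in root.iter(f"{{{NS['rdf']}}}Description"):
        summary["CreatorTool"] = summary["CreatorTool"] or read_property(desc, "xmp", "CreatorTool")
        summary["CreateDate"] = summary["CreateDate"] or read_property(desc, "xmp", "CreateDate")
        # Each history event records an action and, often, the software that performed it.
        for event in desc.findall("xmpMM:History/rdf:Seq/rdf:li", NS):
            summary["history"].append({
                "action": read_property(event, "stEvt", "action"),
                "when": read_property(event, "stEvt", "when"),
                "softwareAgent": read_property(event, "stEvt", "softwareAgent"),
            })
    return summary


# Invented sample packet, wrapped in junk bytes the way it would sit inside a real file.
sample = b"""<x:xmpmeta xmlns:x="adobe:ns:meta/">
 <rdf:RDF xmlns:rdf="http://www.w3.org/1999/02/22-rdf-syntax-ns#">
  <rdf:Description rdf:about=""
    xmlns:xmp="http://ns.adobe.com/xap/1.0/"
    xmlns:xmpMM="http://ns.adobe.com/xap/1.0/mm/"
    xmlns:stEvt="http://ns.adobe.com/xap/1.0/sType/ResourceEvent#"
    xmp:CreatorTool="Adobe Photoshop CS5 Macintosh">
   <xmpMM:History>
    <rdf:Seq>
     <rdf:li stEvt:action="created" stEvt:softwareAgent="Adobe Photoshop CS5 Macintosh"/>
    </rdf:Seq>
   </xmpMM:History>
  </rdf:Description>
 </rdf:RDF>
</x:xmpmeta>"""

info = summarize_xmp(extract_xmp(b"\xff\xd8...image data..." + sample + b"...more data..."))
print(info["CreatorTool"])  # Adobe Photoshop CS5 Macintosh
```

A dedicated tool such as ExifTool will report far more fields than this. The sketch only shows where the software and history entries live.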

That said, the claim on the YouTube channel where the video is posted does say the image was created using “only a finger, an iPad Air and the app Procreate”, and I think there is reasonable doubt about that.

If this sort of thing interests you at all, you may also like to read more about the metadata at Sebastian’s Drawings and The Hacker Factor Blog. I should say that while I find their information interesting, I don’t entirely agree with their conclusion that this is merely a manipulated photo, unpainted and played back in reverse. But their analysis will explain why I think it could take 295 layers to get the job done properly.

Does it even matter?

I suppose it only matters if you are compelled, on the basis of this news, to buy something that might not quite be capable of doing what you thought it could do. But if the image was actually produced on the iPad, despite contradictory evidence in the metadata, and if it was done in one app using only fingers, then the software is clearly more capable than a kindergarten crayon.

If this level of control and detail is now possible – with fingers – that would be a story even if we knew exactly how the results were achieved.

If this can be done on an iPad with a piece of $7 software and a finger, I’d consider buying an iPad tomorrow. But for me, there are too many questions that need to be answered and I’m not about to buy an iPad to find the answers.

Here is your answer:

http://www.sebastiansdrawings.com/post/69093322933/please-dont-cheapen-genuine-artists

Thanks Anon. That's the link I provided in the article.

In a previous article, Sebastian insists…

"The only way this could be done, is if you start with a photo and then paint over it, blurring out details. And then replay the video backwards."

I'm not convinced that's the case. But the presence of the original file hash in the metadata does raise questions.

This comment has been removed by a blog administrator.

Okay Anonymous, I get that you think the video is a fake. But there's no need for the language, so I've deleted the comment. Feel free to re-comment without the language but, please, be very careful of any libel you might be tempted to throw around, or I might be tempted to delete the comment again.

For the record, I acknowledged in the article the amazing accuracy of the progress shown in the video and concluded that, as far as I'm concerned, it was almost certainly traced somehow. Minor colour corrections would be unlikely to show in a video where 200 hours is compressed into a few minutes so I'm not too fussed about that issue.

I also agree that if this was part of a marketing strategy, it appears to have been very successful – but that doesn't mean the claim is 100% false.

There is only one problem with this whole thing (I am an iPad artist also): the app Procreate itself did not offer video playback until recently, when it was added to keep up with the app Sketch Club. At the time of this Morgan Freeman creation, he would have had to use an external device to video the process, and thus could have cheated. I have followed his amazing work, but this painting came well before Procreate had video playback as an option. So it could have been done over a photo back then, with an external video camera focused on the iPad. Just a thought; his work is great, though.